Conversations

Immanentizing the Eschaton

Will Arbery and Josh Kline in conversation about artificial intelligence and the political future

Josh Kline, Unemployment, 2016. Installation, dimensions variable. Installation view: Fondazione Sandretto Re Rebaudengo, Turin, 2016–17. Photo: Paolo Saglia. Courtesy the artist and Fondazione Sandretto Re Rebaudengo, Turin

Artist Josh Kline and playwright and screenwriter Will Arbery both live, in a sense, in the future. Kline’s work, described by The New Yorker as “uncomfortably prescient art about the dehumanizing nature of work,” has for more than two decades looked unblinkingly ahead to the most daunting implications of 21st-century life. Arbery’s play Heroes of the Fourth Turning (2019), a Pulitzer finalist, presents the frighteningly realistic prospect of millenarian religious warfare in America. At Ursula’s invitation the two recently met for the first time to share their thoughts about the political and cultural ramifications of the emerging artificial intelligence revolution. These are edited excerpts of their conversation.

Randy Kennedy: I thought we might start this conversation by talking about 2024, which is going to be a big year in the U.S., for many reasons that I can’t quite recall at the moment [laughs], but it’s going to be a momentous year. Or we could just dive in and talk about artificial intelligence, which both of you have thought about deeply, from different perspectives. It seems like we’re all perched on the cusp of a revolutionary change that’s about to take place in the economy and in human life because of AI. Josh, you pay more attention to this than all of us do, maybe more than anybody in the art world does, so it would be good to hear from you about what you think is on the horizon.

Josh Kline: America’s unraveling politics are going to have the bigger immediate impact. AI is definitely here, though, and rapidly maturing. My project Unemployment [2016] was a warning about the political consequences of mass unemployment due to automation and AI. When I was working on it in 2015, people in the field were predicting that AI would come for middle-class jobs in the 2030s. But that moment is here now. After a few years of working out the kinks with interfaces and tailoring large language models so they can be easily customizable for individuals and businesses, AI is likely to replace a lot of middle-class professional jobs.

Alexandra Vargo: Which professions do you think they’ll start to reach first, in terms of job losses and realignment?

JK: Office work, administrators, secretaries—any job where someone has to process or organize information. A lot of jobs in banking, law and sales are likely to go the way of travel agents. But I also think AI will pose a real threat to people who write for a living. People like you. A lot of writing about artists has already been replaced—at great cost savings—by interviews with artists. The next step is to eliminate the writer and just have AI come up with questions for the subject. Publications have access to all your writing already and could feed it into a large language model. I think a big surprise for people will be the sheer number of creative sector jobs that are at risk.

RK: If a large language model had scoured, for example, everything I’d ever written or published, could it at present serve as a moderator for a conversation, as I’m doing right now, except better?

JK: Not yet, but it seems likely by the end of the decade.

Will Arbery: Nice. [Laughs.]

RK: Yeah. Nice. What else can you say to that? [Laughter.] In your professional and creative circles, Will, have you already seen artificial intelligence start to make itself known?

WA: Well, it was one of the core issues at the center of the writers’ and actors’ strike in Hollywood this year. The studios’ initial reaction to the guild was basically: “We’re not going to give you any of the protections you’re asking for, but we will do an annual check-in about the progress of AI, an open forum to talk about how far it’s come,” which was a terrifying, bureaucratic non-answer. And it just really showed their ass in terms of how curious they are about what they can use it for and get out of it, how quickly and cheaply they can profit from it. Luckily, we were able to get some protections against it, but everyone just has this feeling of dread, I guess, like a ticking clock.

As a writer, I think about this: There are so many things that already feel as if they’re written by AI but have credited writers whom I’ve heard of. Maybe this is perversely empathetic, but I start to get interested in the question of consciousness, and whether there is going to be a voice calling out from inside that shit, a voice that is real, and does it have something to teach us? Could it in fact be better than a lot of the stuff that’s written right now? [Laughs.] I don’t know. The brain must go there.

JK: There’s this story that at his 40th birthday party, Elon Musk had a blowout argument about AI with Larry Page, one of the founders of Google, where Page accused Musk of being guilty of “speciesism”—prejudice in favor of the human species and human beings against future hyperintelligent machines that don’t exist yet. There are people in Silicon Valley who think we shouldn’t put guardrails on the development of AI because, in doing so, we’re discriminating against future AI, whose right to exist outweighs any potential threat to humanity.

It’s unclear when artificial general intelligence, AGI, will arrive, but I’ve heard there is a small—but nonetheless real—possibility that AGI will emerge out of the current work being done to improve large language models like ChatGPT.

RK: What does that mean?

JK: Artificial general intelligence means an AI with human-level intelligence or better. The engineers developing large language models don’t actually understand a lot of what’s going on under the hood of their models. There’s a possibility of some kind of awareness in there. If consciousness is an emergent property of this kind of intelligence, sentient AGI could be a side effect of this work. Artificial consciousness is distinct from artificial intelligence. Scientists still understand very little about consciousness—where it comes from and how it works. This makes engineering artificial consciousness more difficult.

WA: Years ago I read about the work of Swedish philosopher Nick Bostrom, and it haunted me, and I ended up writing a pilot called Parallel, Texas, inspired by his idea that if we are able to create artificial intelligence that thinks it’s conscious—that is, conscious within a simulation—then there’s a near mathematical certainty that we’re already living inside one. In other words, we are that thing we are scared of.

JK: What’s amazing is how many people in Silicon Valley already believe this. I wonder if they think it won’t matter if they ruin the world, because in their heart of hearts, it’s not real anyway. Which feels very religious, very Book of Revelation.

Will, I was interested in talking to you about your experiences in the writers’ room because I feel like there’s a window for making movies that could narrow or slam shut in the years ahead. AI could transform media in ways that the WGA isn’t even thinking about.

I could see a not-too-distant future where there is a kind of illegal AI YouTube—an ocean of infinite, flawless, high-resolution, AI-generated video fan fiction, where people can have exactly what they want. People will be telling their own stories—or endlessly rewriting the glut of stories that already exist. Why would you need paid writers or filmmakers to make or adapt Marvel comic books into movies when AI can generate personalized versions of Marvel movies in a few minutes? Or infinite versions of whatever story or content you might be in the mood for?

WA: I think you’re right.

RK: Will, do you feel that same sense of pressure about the window closing for filmmaking or even, I suppose, theater?

WA: Not about theater. I think theater is not going anywhere because it’s always just been bodies in a room. It requires a sharing of breath with the thing that’s happening. After whatever major civilizational collapse happens, if it happens, maybe expedited by AI, theater will probably immediately just continue because that’s what it is, the most basic form of telling each other’s stories, or at least using our bodies to tell each other’s stories. It’s how we survive. But I do feel the pressure with film, because of AI, but also because of my own mortality. It’s a dream I’ve had since I was a boy. I think: “I’ve loved movies as long as I can remember and I’ve always wanted to make one. Why the hell haven’t I done it yet?” That’s just basic human ambition at work, me thinking that I’m special. [Laughs.] What’s interesting to me about what Josh is saying is that AI could exist just to feed us the illusion of our own specialness over and over again, on a loop, in increasingly advanced forms. I write a lot about people with faith who believe we were created in the image of God and really need that idea in order to function. And so a lot of what I do revolves around that question: Are we special?

RK: I was going to ask you, Will, if you have a sense about how the idea of AI figures in for the people you grew up with, very conservative religious thinkers. Where does AI, or the possibility of machine consciousness, fit into their model of the soul, of belief?

WA: It reminds me of that William F. Buckley slogan for young conservatives: “Don’t immanentize the eschaton.” Which is basically a very fancy way of saying: “Don’t try to create heaven on earth. All you’ll do is speed up the apocalypse.” He rooted his conservatism in the belief that a blanket emphasis on progress is dangerous and can often be evil and destructive. A lot of the people that I grew up with and the sort of people I write about would say that AI is a tale as old as time. It’s the Tower of Babel. It’s man trying to play God. And maybe it’ll be the thing that leads to the emergence of the Antichrist and speeds up the doomed eschaton. [Laughs.]

RK: On the more immediate political front, Josh, you’ve talked about the loss of white-collar jobs or certain kinds of highly skilled jobs causing a big shift of the population to the political right, in the way that automation and globalization did for blue-collar jobs beginning several decades ago. Where do Silicon Valley and people like Elon Musk and Reid Hoffman and Peter Thiel, whose funding has accelerated artificial intelligence, fit into equations about right versus left?

JK: I think they’re all over the place on the political spectrum. Somebody like Peter Thiel is a kind of right-wing accelerationist—at least based on his public statements and his political philanthropy—who advocates for developing AI whatever the consequences. For him, all the people who are outmoded by it can just go die. There are people in Silicon Valley who genuinely believe that the benefits of AI will outweigh the danger from any consequences.

RK: For us as humans or as shareholders in Google?

JK: For the owners of Google. They want to live forever. And if AI can solve all of the medical problems that stand in the way of doing that and allow them to run off to Mars or wherever in the next sixty, seventy years—for them, that outweighs the political destruction and human misery that AI could unleash around the world.

WA: I’ve read a lot about Peter Thiel, and he’s hard to pin down because he can be both a conservative supporter and also semi-transhumanist, hastening the very thing that a lot of these people fear the most. It’s baffling. But at Google, there are probably a fair number of employees who genuinely believe that for all of our big, urgent questions, AI will help us get answers faster. There are already reports about AI leading to the discovery of new minerals in the earth, helping speed the science that will lead to clean energy, helping reverse the effects of climate change. Sort of: “No, let’s absolutely immanentize the eschaton,” which of course a lot of conservatives think is a fool’s errand because you’ll never have utopia on earth. The Garden of Eden was that. Original sin took that from us, so the real utopia is in the next life, and we will not achieve it on this earth. I think a company like Google thinks we can achieve it and that they’re the good guys.

RK: There’s the short story by Kafka in which he reinterprets the story of the Tower of Babel as a myth of the impossibility of progress. It’s not that God came in and changed everybody’s languages so they couldn’t work on the tower. It was that the people who began to build the tower never really got underway because they knew the next generation would have superior materials and skills and would just tear down what they made and so there was no urgency. The next generation felt the same way, and so on—a quite conservative take on human progress.

JK: Super conservative. The changes that we’re potentially looking at later in the 21st century are on the order of the agricultural revolution—a total revolution in our most fundamental modes of production. Personally, I think it would be great if we had a post-work, post-scarcity, abundance-based utopian society—like in Star Trek—but I don’t believe that Google or companies like it are going to bring us utopia.

WA: I agree, but why not?

JK: Silicon Valley has a neoliberal mythology that tells them they’re good people, doing good for the world, and that they should help others through voluntary charity, through philanthropy, rather than through paying taxes. The dominant ideology among the tech titans isn’t democratic. They’re not thinking through the consequences of what they’re doing based on an understanding of history. If they were, they would be advocating for different things and using their money to work on American politics in a different way. Right now, it seems like they’re just working really hard to destabilize an already very unstable situation.

AV: Josh, what has your reading and research told you about the more short-term political repercussions of the displacement of white-collar jobs in benefiting the right?

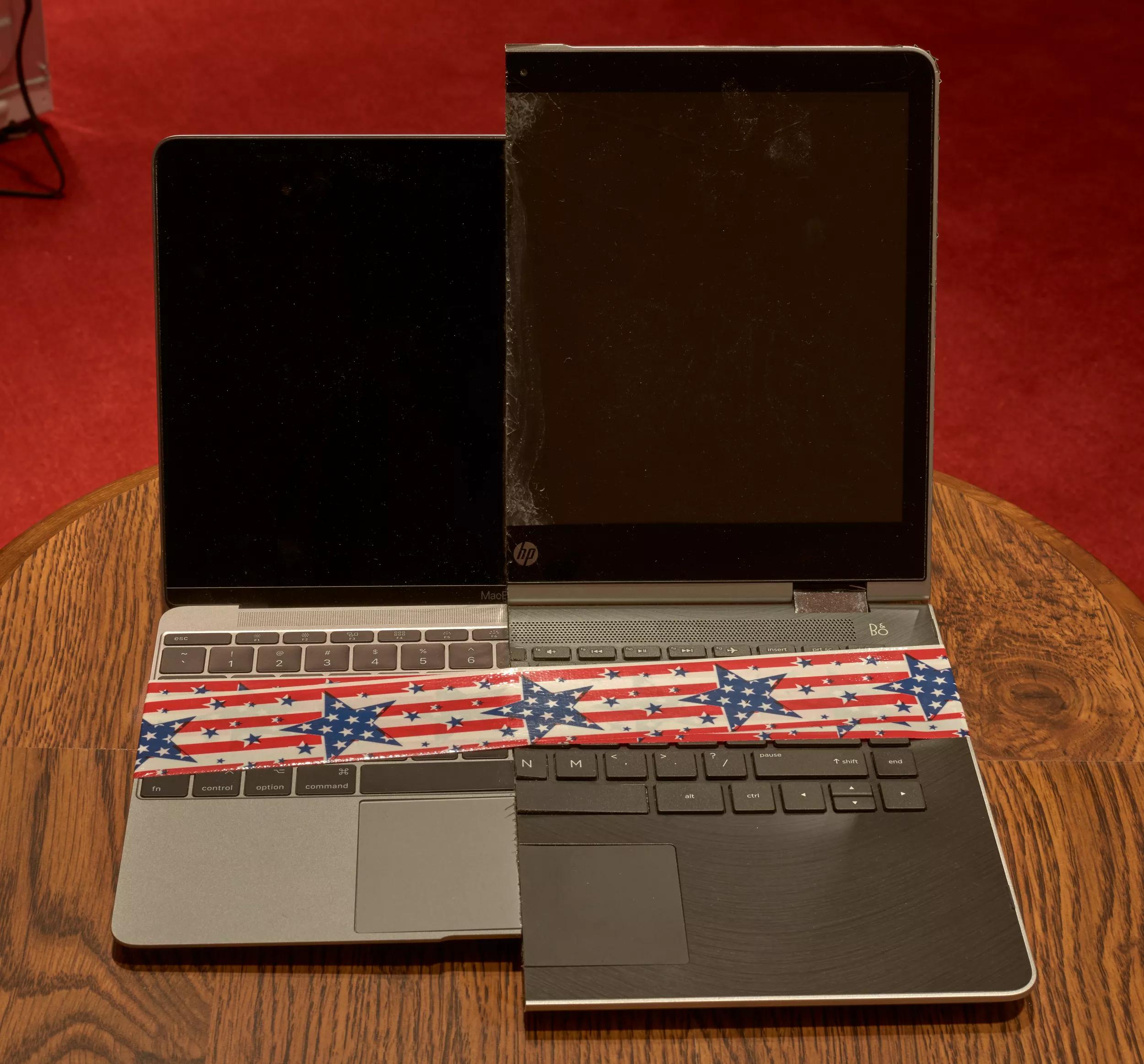

Josh Kline, Lies, 2017 (detail). HP laptop, MacBook, hardware, duct tape, custom wooden display, contact speaker, audio hardware and audio file, 37 3/8 × 21 1/4 × 20 5/8 in. (95 × 54 × 52.5 cm). Photo: Robert Glowacki. Courtesy the artist and Modern Art, London

JK: That there are many parallels between the early 21st century and the early 20th century. Two stand out in particular. One is the decline of the British Empire in the wake of World War I, when the British ruling class were unwilling to take money from the wealthy (from themselves, through taxes) to pay for their war. So—even though they were rich beyond imagining—they went into debt to America to pay for the war and lost their empire. It’s similar to what’s going on in America now. The U.S. has been involved in all sorts of wars and conflicts over the last twenty years, wars that its ruling elite don’t want to pay for, not to mention being too greedy to pay for a social safety net. Aggressive expansionist states like Nazi Germany, Imperial Japan and the Soviet Union took advantage of the political vacuum when Britain went into decline. There are a number of great powers today that see America in decline and are already trying to step into that vacuum.

The other parallel—in so many nations—is with countries like Germany during the Great Depression, where mass unemployment and destabilization among the middle classes led to the rise of fascism and the Nazis. If you take away the security of the middle class, they’re going to go looking for a strong man (or woman) to solve their problems and for someone to blame. It’s happening all over the world right now. Look at Argentina with the election of Milei. Or Meloni in Italy, or Wilders in the Netherlands. Will, I’m curious what you think is coming down the pipeline.

WA: When I look to the future, my eyes get fuzzy. I see a sort of chaos or a yearning for chaos. At least that’s an energy I’m picking up from a lot of people. I see an addiction to The Big Show. I think Josh is right. There is no understanding of history. I’m guilty of that, too. And I often feel complicit in the way the entertainment industry conditions people to just change the channel whenever they want, or flip to the next TikTok.

JK: I guess I’m pretty forgiving of the entertainment industry. For me, the issue is the gutting of America’s educational system. You have other countries that are saturated with American media, like the Scandinavian countries, for instance, but they have well-funded schools with a mission to equip citizens with basic critical thinking skills and some history—even if it’s not perfect. Meanwhile, America’s educational system has been gutted over the last fifty years by consecutive neoliberal governments.

WA: I guess I’m just talking about the general human desire to be distracted from what’s going on, and entertained. I was just at the Emmys, and they included the moon landing and the towers being hit on 9/11 in their montage of “unforgettable TV moments”—the rest of which were all scripted. You can see why people are clawing at the walls of the cave, questioning everything, trying to get to what’s real.

JK: That’s nuts about the Emmys montage. And it does feel like we’re being isolated into our own personalized social media caves. I don’t mean to be entirely gloomy. Concerning AI and what’s on the horizon politically, I think there is a very real possibility for something more utopian than the society we live in, but there has to be a political will to seize that future. If there’s a safety net and a robust universal basic income, for instance, that would help with the transition into an automation-based society. This all starts with taxes. None of our real problems get solved and none of our dreams come true unless we start raising taxes on rich people, which I know is a great thing to be saying in Hauser & Wirth’s art magazine, but it’s true. [Laughter.]

AV: It is true. I’m just curious before we sign off, would either of you consider using AI in your own work, as artists and storytellers?

JK: I mean, everyone will. I’ve already tried. I asked ChatGPT to write dialogue for me for a script, but I couldn’t get it to stay in character. It’s just not smart enough to play my weird characters yet.

WA: If I were to use it, I would be transparent about it—like, “Here’s a section of this thing that is ChatGPT. Now let’s hear from it.” But right now, it seems like an annoying level of labor to try to make it make interesting art for me. So I guess I’ll just keep making art myself. [Laughs.]

–

Will Arbery is a playwright and screenwriter. His plays include Evanston Salt Costs Climbing (2022), Corsicana (2022), Plano (2019) and Heroes of the Fourth Turning (2019). He is a Pulitzer Prize finalist and the winner of a Whiting Award, a WGA Award, an OBIE Award, a Lucille Lortel Award and a New York Drama Critics’ Circle Award for Best Play. He wrote the libretto for Demons, which is slated for production at the Met Opera. He was a writer for the final two seasons of the HBO television series Succession.

Josh Kline works in installation, video, sculpture and photography. In 2023, his work was the subject of a major survey at the Whitney Museum of American Art in New York. His solo exhibition, “Josh Kline: Climate Change,” is on view at the Museum of Contemporary Art, Los Angeles, from June 23, 2024, through January 5, 2025. He lives and works in New York City.